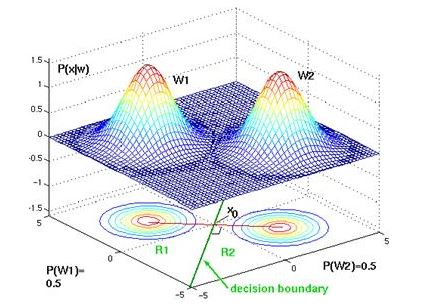

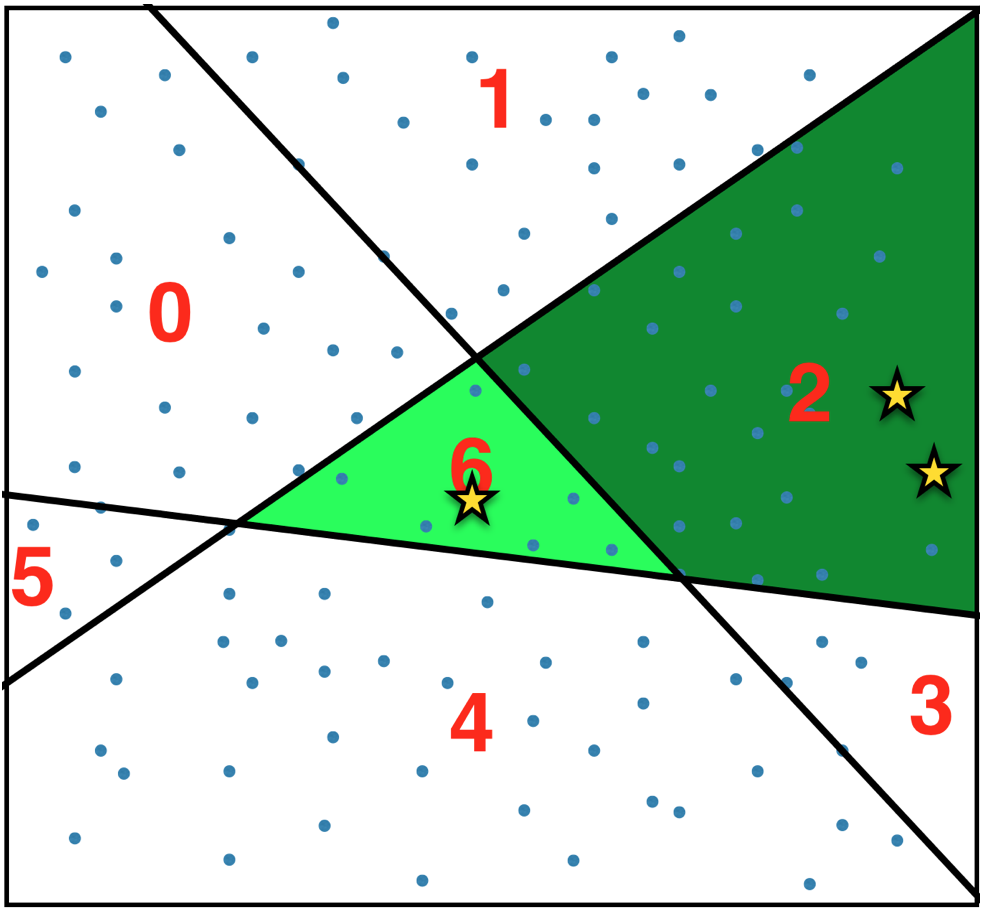

DECISION BOUNDARY FOR CLASSIFIERS: AN INTRODUCTION

There are many debates on how to decide the best classifier. So, let's start.

WHAT IS A DECISION BOUNDARY?

While training a classifier on a dataset with a specific classification algorithm, we need to define a set of hyperplanes, called the decision boundary, that separates the data points into classes. The decision boundary is where the algorithm switches from one class to another. On one side of the decision boundary, a data point is more likely to be labeled class A; on the other side, it is more likely to be labeled class B.

Let's take logistic regression as an example. The goal of logistic regression is to find a way to split the data points so that we can accurately predict a given observation's class using the information present in the features. Suppose we define a line that describes the decision boundary. Then all points on one side of the boundary are assigned to class A, and all points on the other side are assigned to class B.

In order to map predicted values to probabilities, we use the sigmoid function:

S(z) = 1 / (1 + e^(-z))

where z is the model's raw score for an observation and S(z) is an output between 0 and 1 (a probability estimate).

Our prediction function therefore returns a probability score between 0 and 1. To map this to a discrete class (A/B), we select a threshold value, or tipping point, above which we classify values into class A and below which we classify them into class B. For example, with a threshold of 0.5, a prediction of 0.7 would be classified as class A, and a prediction of 0.2 would be classified as class B. So the line where the predicted probability equals 0.5 is the decision boundary.

A decision boundary is a surface that separates data points belonging to different class labels. Decision boundaries are not confined to the data points we provided; they span the entire feature space we trained on. The model can predict a value for any possible combination of inputs in our feature space. If the data we train on is not 'diverse', the overall topology of the model will generalize poorly to new instances.
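The sigmoid mapping and the threshold rule from the logistic regression example can be sketched in a few lines of plain Python. This is only an illustration: the `weights` and `bias` values are hypothetical (not fitted to any data), the helper names are made up for this sketch, and treating a probability exactly equal to the threshold as class A is an assumption, since the text only covers values above and below it.

```python
import math


def sigmoid(z: float) -> float:
    """Map a real-valued score z to a value in (0, 1)."""
    # Two branches avoid overflow in exp() for large |z|.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def predict_proba(x, weights, bias):
    """Probability of class A for one observation x (a sequence of features)."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return sigmoid(z)


def classify(x, weights, bias, threshold=0.5):
    """Return 'A' at or above the threshold, 'B' below it (tie rule is an assumption)."""
    return "A" if predict_proba(x, weights, bias) >= threshold else "B"


# Hypothetical parameters: the linear score is z = x1 - x2.
weights = [1.0, -1.0]
bias = 0.0

for point in [(2.0, 0.5), (0.5, 2.0), (1.0, 1.0)]:
    p = predict_proba(point, weights, bias)
    print(point, round(p, 3), classify(point, weights, bias))
```

With a threshold of 0.5, the boundary is the set of points where the linear score z equals 0, because the sigmoid of 0 is 0.5. For the hypothetical parameters above that is the line x1 = x2, and the model still returns a probability for any point in the feature space, not only for points it was trained on.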